More Mebe Benchmarks


You may remember that earlier this fall I found out just how much faster a 2048-bit HTTPS certificate is for the server to handle. Now that I have one from Let's Encrypt, I decided to redo the performance tests with the new certificate in place. Since I ran out of credits on my blitz.io free account, I ran the new tests on loader.io's free tier instead. That's why the graphs look a bit different this time.

Before I go into the HTTPS results, here is some context. You might remember that last time I got about 730 requests per second served over HTTPS with a 2048-bit key, and about 1380 requests per second for plain HTTP. Quoting myself from that time: "So fast… 🚀". Turns out I spoke too soon. By disabling some extraneous console logging, I was able to more than double the performance. Let's see the latest results.

For reference: The server is an online.net Dedibox XC with an 8-core Intel Atom C2750 processor and 8 GB of DDR3 RAM.

Plain HTTP

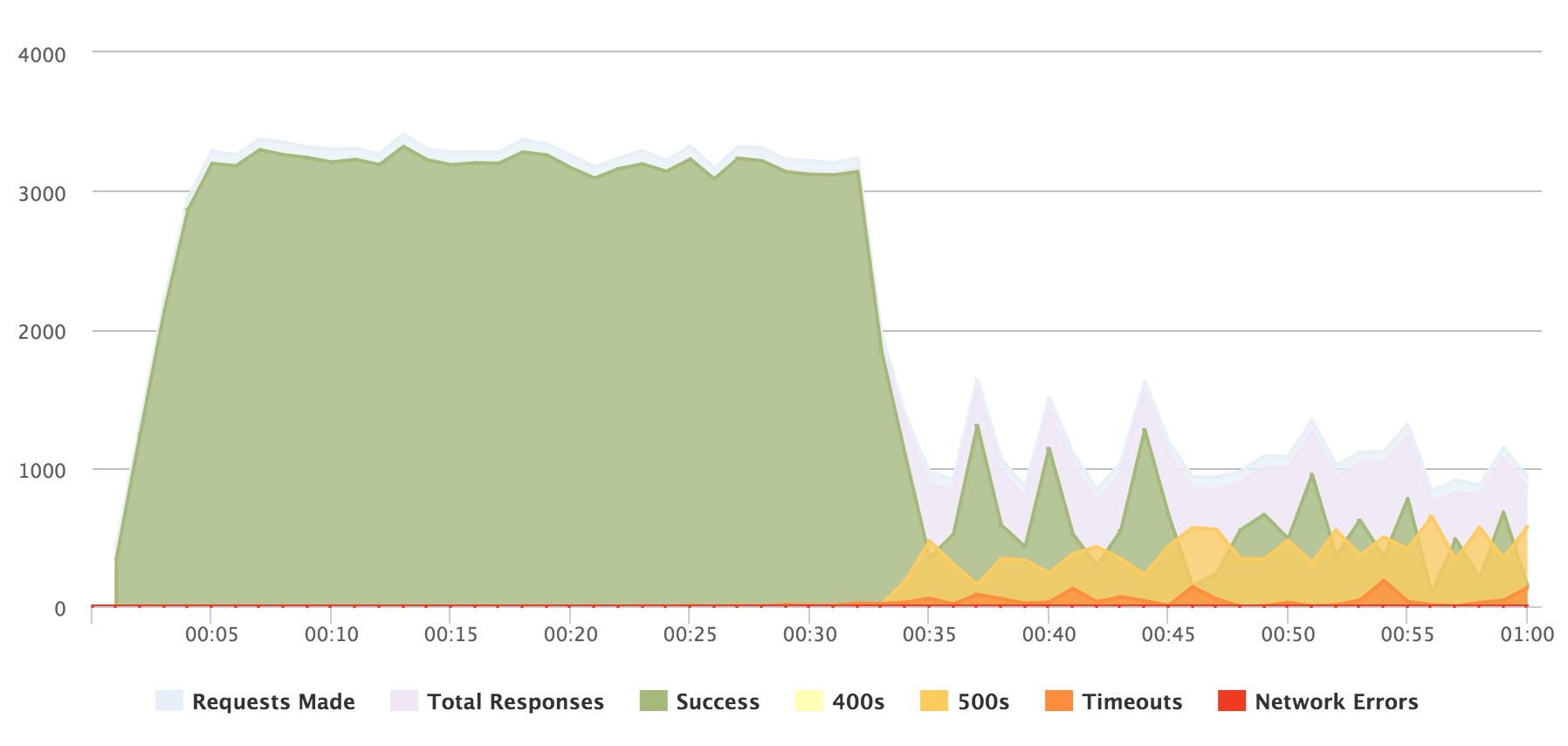

This time I tested both the index page with 10 posts and pagination (as I did in the previous benchmarks) and a single blog post. With plain HTTP, as seen in the graph above, I was able to get a consistent 3100–3300 requests per second from the index page. At somewhere around 3000 concurrent clients the server started to really buckle under the load, though, and many clients got a 5xx error or a timeout. I consider this a pretty good result for a personal blog.

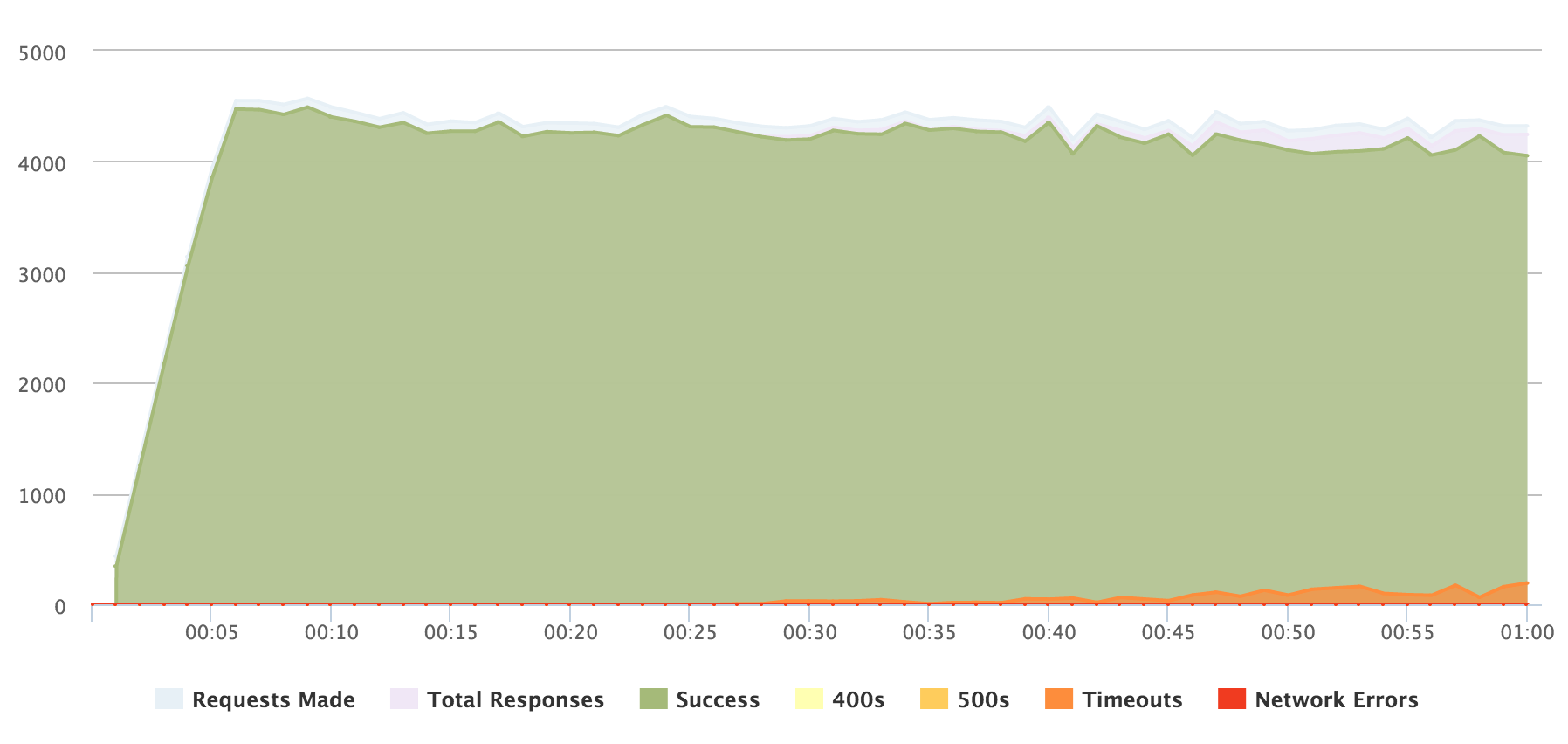

Serving a single post worked even better. Mebe was able to serve a maximum of around 4400 requests per second, consistently keeping up a speed of over 4000 requests per second for the test duration. Sadly I was not able to go further than this to see the breaking point, because the loader.io free tier only allows for up to 5000 concurrent clients.

HTTPS

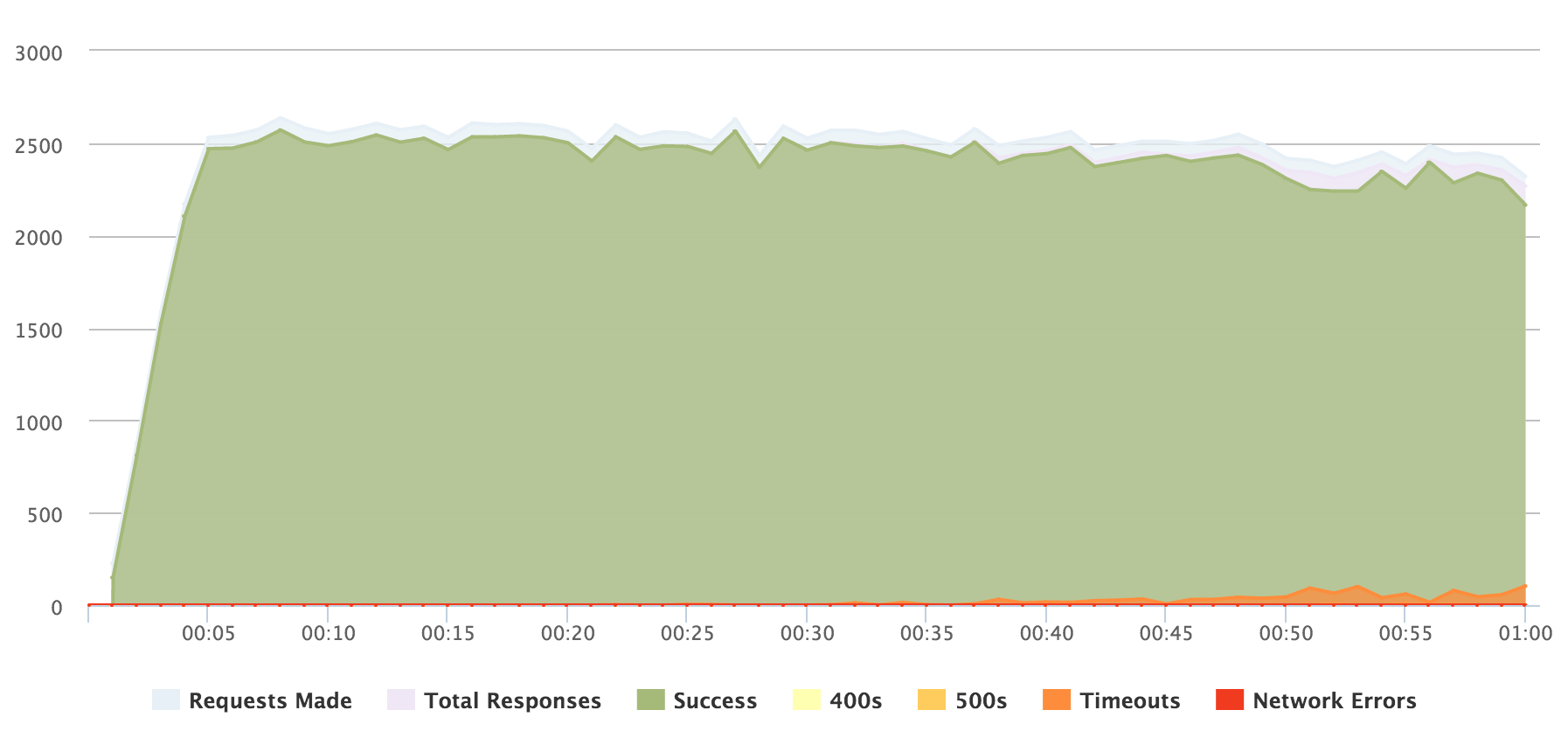

Here are the results I measured today. Over HTTPS I would have expected a slight increase over the previously benchmarked 730 requests per second, maybe to something like 1000–1200. In reality, the blog's (and nginx's) performance blew past that estimate by a wide margin. The blog was able to serve the index page at 2200–2500 requests per second with very few timeouts.

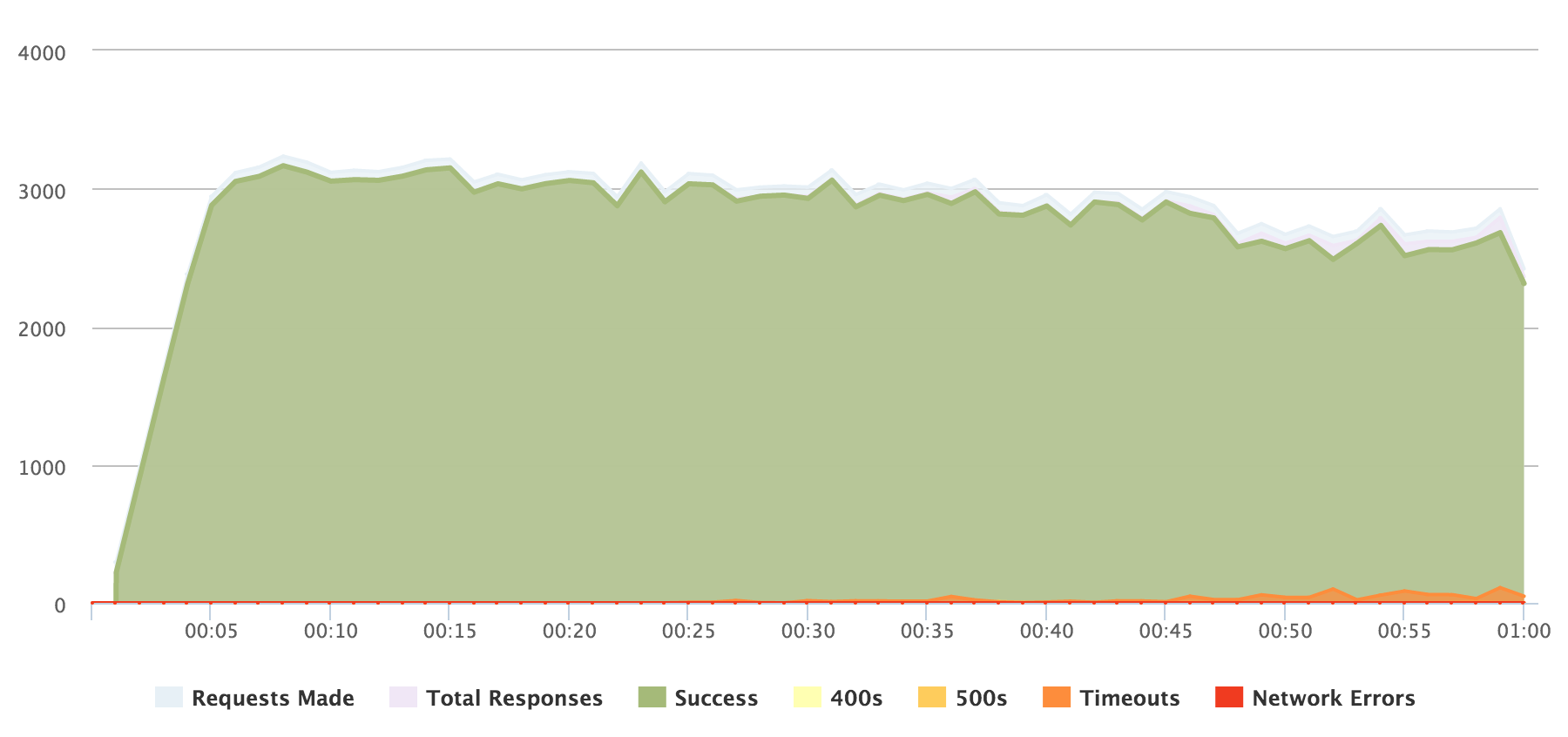

The single post, as expected, was faster. It started at just over 3000 requests per second, settling at around 2500–2700 near the end of the test.
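To put the index page numbers next to the previous round, here is a small Python sketch that computes the speedup factors. It only uses the rough figures quoted in this post (about 1380 and 730 requests per second last time, 3100–3300 and 2200–2500 now); the variable and function names are made up for illustration, and the single post is left out because it was not part of the earlier benchmarks.

```python
# Index page throughput in requests per second, as quoted in this post.
# These are rough, eyeballed figures, not raw measurement data.
previous = {"HTTP": 1380, "HTTPS": 730}
current = {"HTTP": (3100, 3300), "HTTPS": (2200, 2500)}


def speedup_range(old, new_range):
    """Return the (low, high) speedup factors of new_range over old."""
    low, high = new_range
    return low / old, high / old


speedups = {
    proto: speedup_range(previous[proto], current[proto])
    for proto in previous
}

for proto, (low, high) in speedups.items():
    print(f"{proto}: {low:.1f}x to {high:.1f}x faster than last time")
```

With these inputs the index page comes out at roughly 2.2x to 2.4x faster over plain HTTP and 3.0x to 3.4x faster over HTTPS.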

These numbers are way higher than I expected based on the last tests. Note that they were measured without any caching, so with proper caching in nginx (or some other system) they could be improved a lot. Personally I'm very happy with them, but I won't say anything more, so that I don't have to eat my words again at some point in the future!