HTTPS Performance, 2048-bit vs 4096-bit


**UPDATE:** I wrote a new post with newer and faster benchmarks.

After the Snowden revelations, I personally started looking more into encrypting my online activities and making sure sites that ran on my server were (relatively) secure. Eventually I put this blog behind HTTPS as well, not really for any security benefit, since I'm not talking government secrets and the blog has no admin panel, but rather for learning about TLS and how to set it up properly. Problem was, it seems I did not read about things properly. This blog post describes one result of that ignorance.

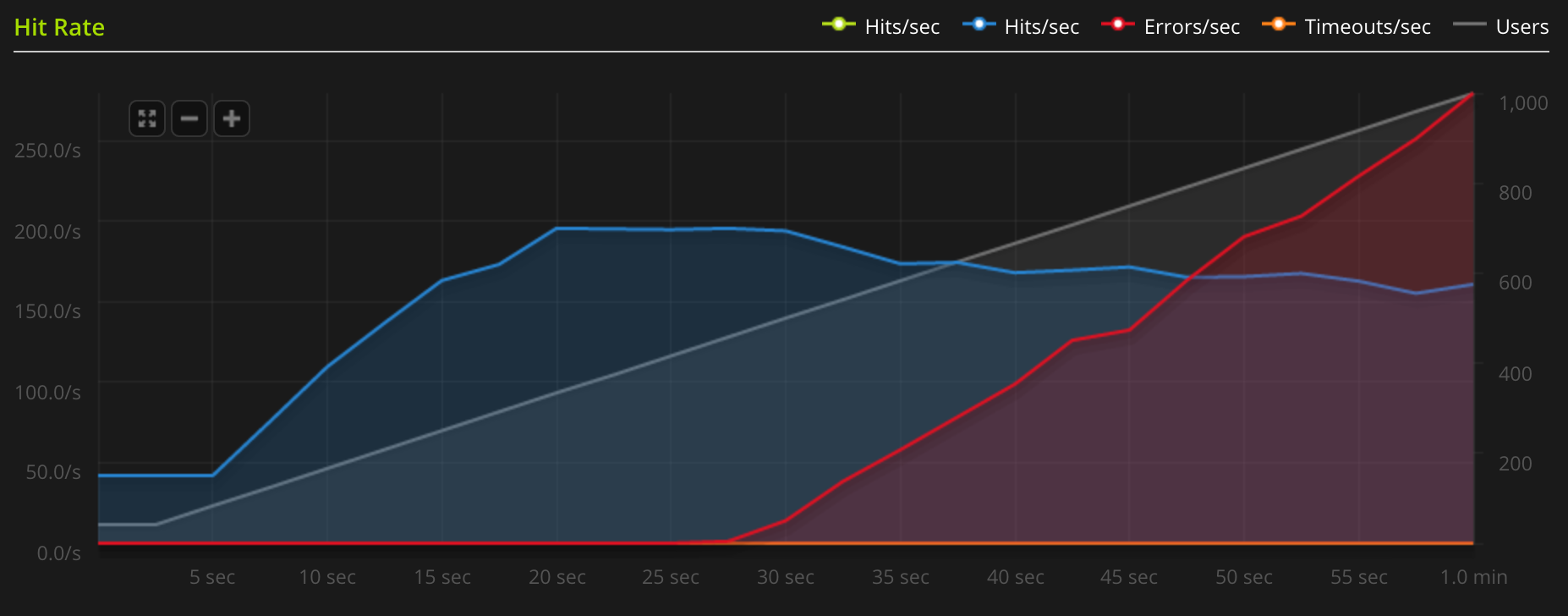

I sometimes like using blitz.io for load testing my server; they have a free plan with a small number of test runs that I use. After setting up the new server, I decided to test my blog's speed. Much to my surprise, it did not handle the load well at all. As you can see from the graph below, the server capped just below 200 requests per second, at which point Nginx was using all cores at 100%.

I was baffled. I had just gotten a new and shiny 8-core server; it was supposed to be fast! Where was my speed? I tried changing some settings and googled around a bit, but didn't find any definitive answer. Later I happened to mention the situation on the ever-so-helpful #elixir-lang on Freenode. A user called voltone gave a few helpful tips and then linked me to a book on Nginx and TLS. I have not gotten the book yet, but I read one of the previews and found the reason. From the book's preview, "SSL/TLS Deployment Best Practices":

> The cryptographic handshake, which is used to establish secure connections, is an operation whose cost is highly influenced by private key size. Using a key that is too short is insecure, but using a key that is too long will result in “too much” security and slow operation. For most web sites, using RSA keys stronger than 2048 bits and ECDSA keys stronger than 256 bits is a waste of CPU power and might impair user experience. Similarly, there is little benefit to increasing the strength of the ephemeral key exchange beyond 2048 bits for DHE and 256 bits for ECDHE.

I wondered how many bits I had in my HTTPS certificate's private key… turns out it was 4096. I quickly ran some numbers on my server:

    [nicd@octane ~]$ openssl speed rsa2048 rsa4096
    [cut irrelevant content]
                      sign    verify    sign/s verify/s
    rsa 2048 bits 0.004742s 0.000142s    210.9   7024.4
    rsa 4096 bits 0.034861s 0.000536s     28.7   1865.9

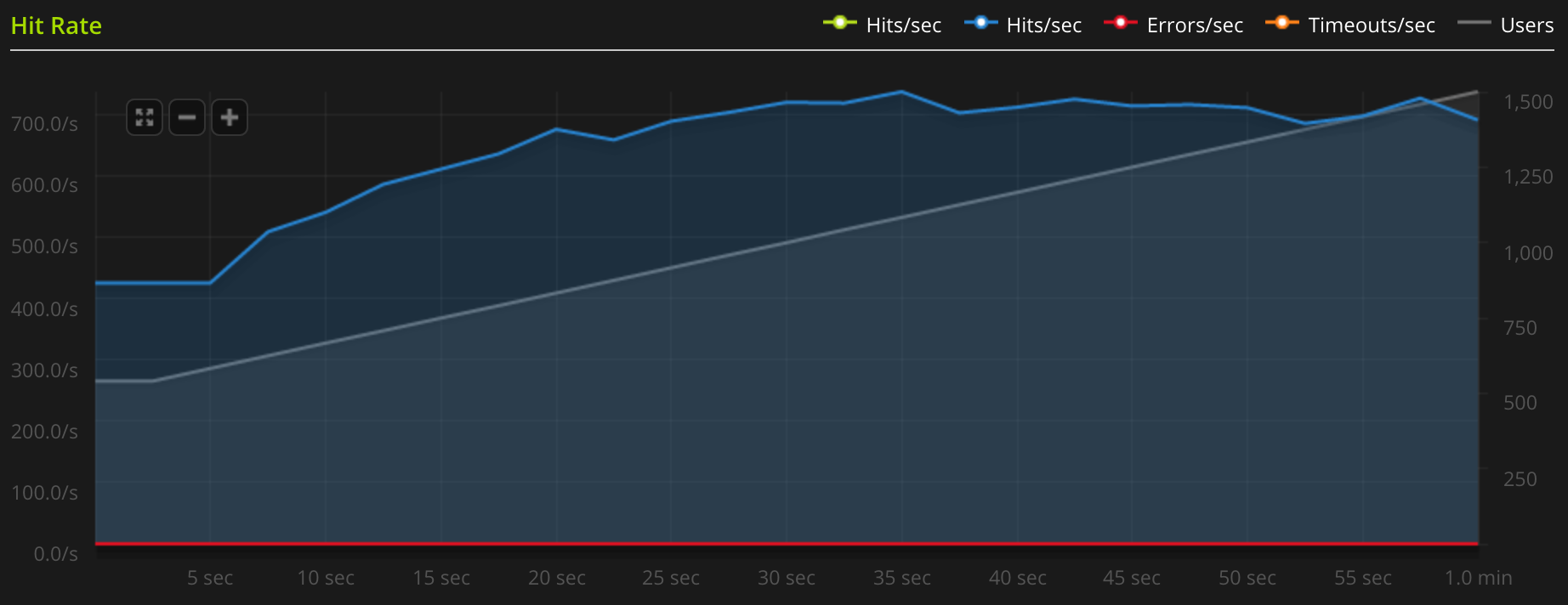

Here's the problem. As you can see from the results, signing with a 4096-bit RSA key takes more than 7 times the CPU time of signing with a 2048-bit key, and the server has to do a private-key operation of this kind for every new TLS handshake. Since 2048-bit keys are considered safe enough, I decided to see what performance gains I could get from changing to a 2048-bit certificate. Turns out the difference was quite big. As shown in the image below, with the new certificate set up in Nginx, I managed to squeeze out a maximum rate of around 730 requests per second. That means it's almost 4 times as fast as before!
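To make the arithmetic behind those numbers explicit, here is a small Python sketch that parses the two result lines from the `openssl speed` run and derives the signing ratio plus a rough handshake ceiling. It is a back-of-the-envelope estimate, not a measurement: it assumes that every request in the load test triggered a full TLS handshake with exactly one RSA private-key operation, that nothing else costs CPU, and that `openssl speed` measured a single core (its default when run without `-multi`). The names `parse_rsa_speed` and `handshake_ceiling` are made up for this example.

```python
import re

# Result lines copied from the `openssl speed rsa2048 rsa4096` run in this post.
OPENSSL_SPEED_OUTPUT = """\
rsa 2048 bits 0.004742s 0.000142s 210.9 7024.4
rsa 4096 bits 0.034861s 0.000536s 28.7 1865.9
"""

# Matches lines such as "rsa 2048 bits 0.004742s 0.000142s 210.9 7024.4".
RESULT_LINE = re.compile(
    r"rsa\s+(\d+)\s+bits\s+([\d.]+)s\s+([\d.]+)s\s+([\d.]+)\s+([\d.]+)"
)


def parse_rsa_speed(text):
    """Map key size in bits to the timings reported for that key size."""
    results = {}
    for line in text.splitlines():
        match = RESULT_LINE.search(line)
        if match is None:
            continue  # skip headers and anything else that is not a result line
        bits, sign_s, verify_s, sign_rate, verify_rate = match.groups()
        results[int(bits)] = {
            "sign_s": float(sign_s),
            "verify_s": float(verify_s),
            "sign_per_s": float(sign_rate),
            "verify_per_s": float(verify_rate),
        }
    return results


def handshake_ceiling(sign_per_s, cores):
    """Upper bound on handshakes per second if signing were the only cost."""
    return sign_per_s * cores


CORES = 8  # the server in this post has 8 cores

speeds = parse_rsa_speed(OPENSSL_SPEED_OUTPUT)
sign_ratio = speeds[4096]["sign_s"] / speeds[2048]["sign_s"]

print(f"4096-bit signing takes {sign_ratio:.2f}x the time of 2048-bit signing")
for bits in sorted(speeds):
    ceiling = handshake_ceiling(speeds[bits]["sign_per_s"], CORES)
    print(f"rsa{bits}: at most ~{ceiling:.0f} handshakes/s on {CORES} cores")
```

Under those assumptions the 4096-bit ceiling comes out at roughly 230 handshakes per second, which is in the same range as the just-below-200 requests per second the load test hit. The 2048-bit ceiling is far above the 730 requests per second actually measured, so with the smaller key something other than RSA signing is presumably the limiting factor.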

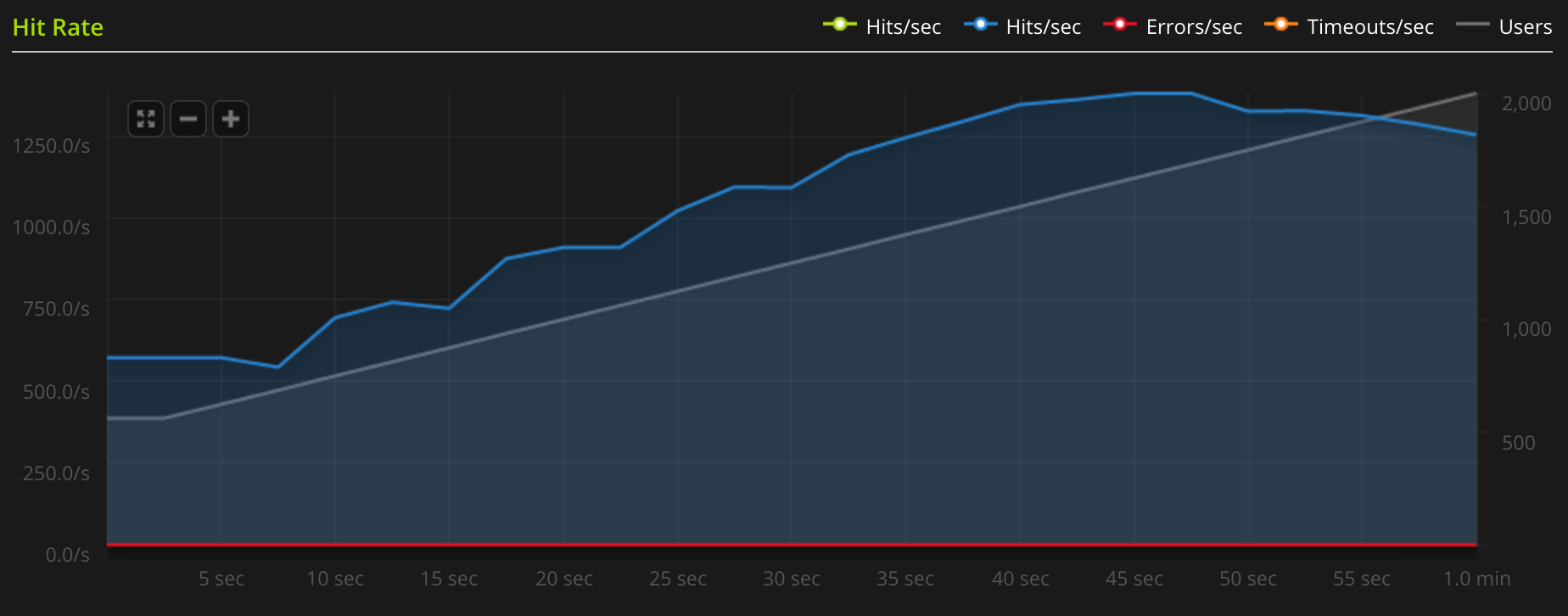

Just for reference, I also benchmarked the blog without any encryption. This time it seemed to peak at around 1380 requests per second. That shows that the performance impact of the encryption is still quite big, but a lot more manageable.
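For a quick side-by-side view of the three peaks, the following sketch expresses each one as a share of the unencrypted rate. The figures are the approximate values quoted in this post ("just below 200" is rounded to 200), and the dictionary keys and the `relative_to` helper are names invented for the example.

```python
# Peak request rates quoted in this post (approximate, read off the graphs).
PEAK_RPS = {
    "https_rsa4096": 200,   # "just below 200", rounded
    "https_rsa2048": 730,
    "plain_http": 1380,
}


def relative_to(baseline, rates):
    """Express every rate as a fraction of the chosen baseline rate."""
    base = rates[baseline]
    return {name: rate / base for name, rate in rates.items()}


shares = relative_to("plain_http", PEAK_RPS)
for name, share in shares.items():
    print(f"{name}: {PEAK_RPS[name]} req/s, {share:.0%} of plain HTTP")

speedup = PEAK_RPS["https_rsa2048"] / PEAK_RPS["https_rsa4096"]
print(f"2048-bit vs 4096-bit: {speedup:.2f}x")
```

With these rounded figures, the 2048-bit setup reaches a bit over half of the unencrypted rate, while the 4096-bit setup manages roughly a seventh of it.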

The moral of the story is: do your homework. When deploying encryption, don't do what I did and go "Wow, 4096! It must be twice as good as 2048!" That's not how any of this works. Instead, read about how things work and what values are considered secure enough (and for what purposes). Then run benchmarks to see the performance impacts. There are no shortcuts if you want to do things The Right Way™.

PS.: At the time of writing the production version of this blog is still running with a 4096-bit key. That's because I cannot request a new one from StartSSL if I don't revoke the old one – and whereas the certificates themselves are free, revoking costs real money. Let's Encrypt can't come fast enough…